Add Fin to your app with the Fin Agent API

Embed Fin directly into your product with full control over the UI. No model integration or agent building required.

View documentation

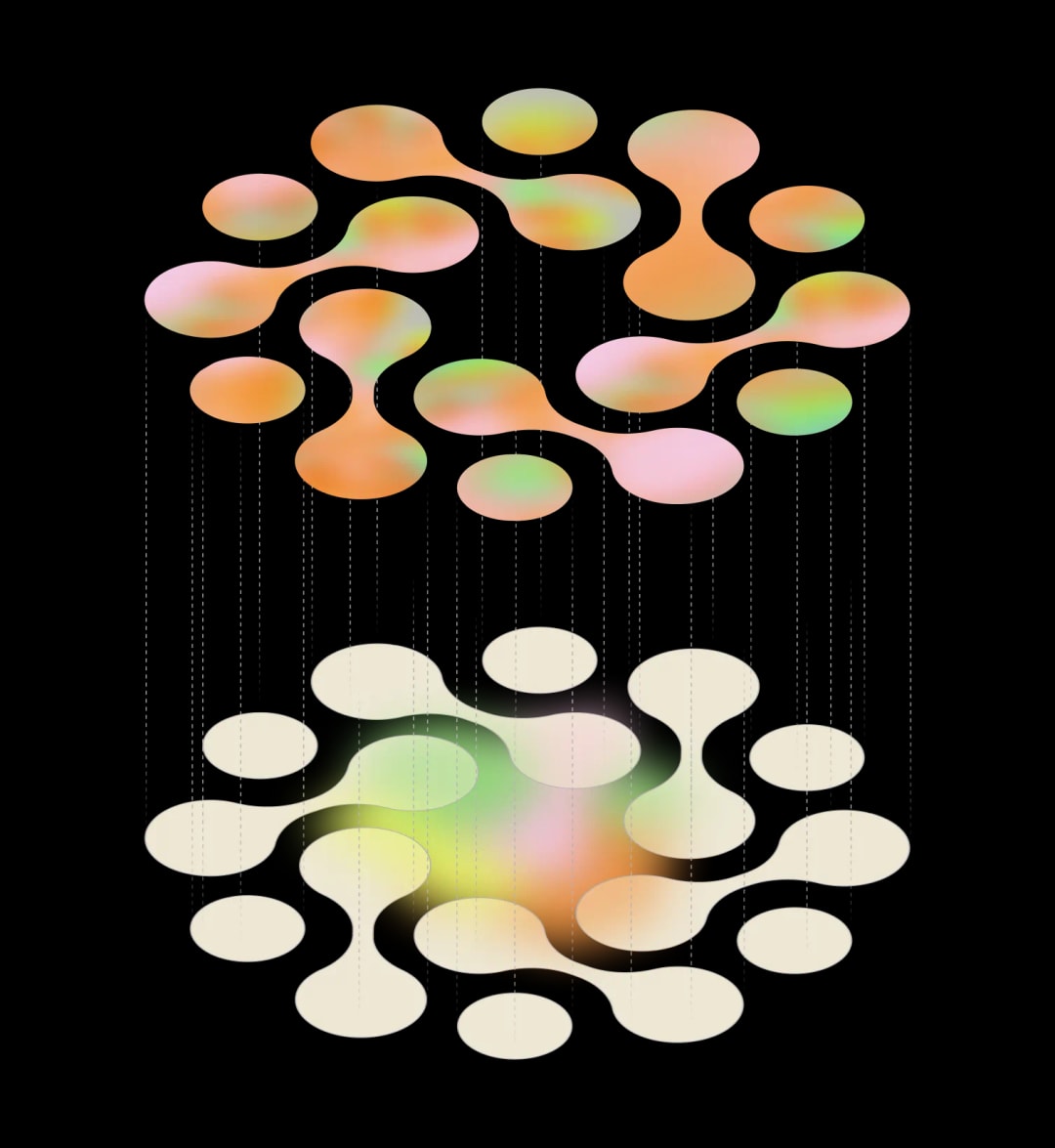

The Fin API platform includes APIs for answer generation and knowledge retrieval, available as a set or independently.

Access the generative model at the core of Fin. Fin Apex is the best-performing customer service LLM, outperforming GPT-5.4 and Opus 4.5 on resolution rate, hallucination rate, and speed.

Access Fin's unified retrieval-augmented generation pipeline over API, including Fin Apex 1.0, Fin Retrieval, and Fin Reranker models.

A custom retrieval model built for customer service use cases, with a carefully tuned tradeoff between efficiency and quality, proven at the largest production scales.

Use the heavy reranker powering Fin to sort candidate items by relevance, optimised for your LLM's context window.
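To make the reranking step concrete, here is a minimal local sketch of the general idea: sort candidate passages by relevance score, then keep the highest-scoring ones that fit a token budget. It does not call the Fin API. The `Candidate` type, the `count_tokens` word-count heuristic, and the `pack_by_relevance` helper are all hypothetical names chosen for illustration, and the scores are assumed to come from whichever reranker you use.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    text: str
    score: float  # relevance score, assumed to be supplied by a reranker


def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def pack_by_relevance(candidates: list[Candidate], token_budget: int) -> list[Candidate]:
    """Sort by descending score, then greedily keep passages that fit the budget."""
    selected: list[Candidate] = []
    used = 0
    for cand in sorted(candidates, key=lambda c: c.score, reverse=True):
        cost = count_tokens(cand.text)
        if used + cost <= token_budget:
            selected.append(cand)
            used += cost
    return selected


# Hypothetical candidates and scores, for illustration only.
candidates = [
    Candidate("reset your password from settings", 0.9),
    Candidate("billing cycles run monthly", 0.2),
    Candidate("password resets expire after one hour", 0.7),
]
context = pack_by_relevance(candidates, token_budget=11)
```

With a budget of 11 word-tokens, the two password passages fit and the lower-scoring billing passage is dropped. A production setup would swap the word count for the target model's actual tokenizer.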

01 Build your own custom agents powered by the best models for customer service. Use Fin's retrieval, reranking, and generative models to enhance your existing agents or build new ones from scratch.

02 Build vertical-specific agents for industries like hospitality, healthcare, and logistics, powered by the best-performing models for customer service.

Insights and blogs from our 60-person AI group building Fin at Intercom.
